The Essential Role of Camera Calibration and Lens Correction in Enhancing Multi-Channel Fusion

Integrating video surveillance with geographic mapping systems has opened new possibilities for security, urban planning, and many other applications. Multi-Channel Fusion is central to this integration, offering a way to accurately combine CCTV video footage with an overhead photomap. This capability improves the accuracy of geographic matching and supports a range of real-time applications. The precision and clarity of the resulting video playback, however, depend on two critical processes: camera calibration and lens correction.

Camera Calibration: The Foundation of Precision

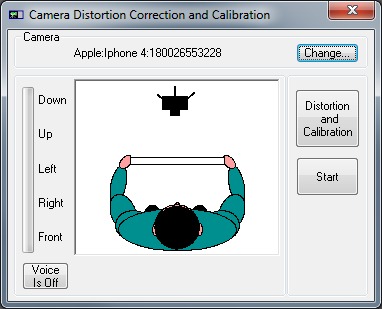

Camera calibration is a fundamental step in ensuring that the video captured by CCTV cameras can be accurately matched with geographic locations on an overhead photomap. This process involves measuring and recording the camera’s intrinsic and extrinsic parameters. Intrinsic parameters describe the internal characteristics of the camera and its lens, such as focal length, principal point, and lens distortion. Extrinsic parameters describe the camera’s position and orientation in the world.
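
To make the two parameter groups concrete, here is a minimal sketch of the standard pinhole camera model, in which the extrinsic parameters move a world point into the camera's frame and the intrinsic parameters turn it into a pixel. The function names and all numeric values are hypothetical, chosen only for illustration; lens distortion is left out, and nothing here is taken from the VideoActive software.

```python
def world_to_camera(point_world, rotation, translation):
    """Apply the extrinsic parameters: X_cam = R * X_world + t."""
    return tuple(
        sum(rotation[i][j] * point_world[j] for j in range(3)) + translation[i]
        for i in range(3)
    )


def camera_to_pixel(point_cam, fx, fy, cx, cy):
    """Apply the intrinsic parameters (no lens distortion) to get a pixel."""
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point is behind the camera")
    # Perspective division, then scale by focal length and shift by the
    # principal point.
    return fx * x / z + cx, fy * y / z + cy


# Hypothetical values: camera aligned with the world axes at the origin,
# focal length of 1000 pixels, principal point at the centre of a 1080p frame.
IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
point_cam = world_to_camera((1.0, 0.5, 10.0), IDENTITY, (0.0, 0.0, 0.0))
u, v = camera_to_pixel(point_cam, fx=1000.0, fy=1000.0, cx=960.0, cy=540.0)
print(u, v)  # prints 1060.0 590.0
```

Calibration is the inverse problem: estimating `fx`, `fy`, `cx`, `cy`, the rotation, and the translation from observations of known points.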

With an accurately calibrated camera, software such as the VideoActive modules can determine how the images and videos captured by the camera correspond to real-world coordinates. This is especially important when creating a user-defined processing pipeline from live sources or locally stored files, because it allows pixels in the video to be mapped accurately to geographic locations.
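
One common way to express a pixel-to-map correspondence is a plane-to-plane homography, which holds for points that lie on flat ground. The sketch below assumes that approach; the source does not say which method VideoActive uses. The function name `apply_homography` and the matrix values are hypothetical.

```python
def apply_homography(H, u, v):
    """Map pixel (u, v) to map coordinates using a 3x3 homography H."""
    x = H[0][0] * u + H[0][1] * v + H[0][2]
    y = H[1][0] * u + H[1][1] * v + H[1][2]
    w = H[2][0] * u + H[2][1] * v + H[2][2]
    if abs(w) < 1e-12:
        raise ValueError("pixel maps to a point at infinity")
    # Divide by the homogeneous coordinate to get 2D map coordinates.
    return x / w, y / w


# Hypothetical homography. In practice it would be estimated from at least
# four pixel/map point pairs identified on the ground plane.
H_PIXEL_TO_MAP = [
    [0.05, 0.0, 100.0],
    [0.0, -0.05, 200.0],
    [0.0, 0.001, 1.0],
]
print(apply_homography(H_PIXEL_TO_MAP, 200.0, 400.0))
```

Objects that rise above the ground plane, such as vehicles or people, do not satisfy this mapping exactly, which is one reason the full calibration described above matters.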

Lens Correction: Enhancing Visual Accuracy

While camera calibration lays the groundwork for accurate geographic matching, lens correction is equally important in refining the visual output. Lenses introduce distortions into the captured image, such as barrel or pincushion distortion, which can significantly reduce the accuracy of the resulting video when it is overlaid on a photomap.

Lens correction algorithms are designed to rectify these distortions, ensuring that the final video output presents a true-to-life representation of the scene. This is particularly important for larger files, such as 4K and 8K videos. As resolution increases, the same optical distortion displaces image features by more pixels, so its effect becomes more noticeable, making lens correction an indispensable part of the video processing pipeline.
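
As an illustration of what such an algorithm does, the sketch below uses a widely used polynomial radial distortion model on normalized image coordinates and inverts it by fixed-point iteration. This is a simplified stand-in, not the algorithm used by any particular product; the function names and the coefficient value are hypothetical. Under this convention a negative `k1` produces barrel distortion and a positive `k1` produces pincushion distortion.

```python
import math


def distort(xn, yn, k1, k2=0.0):
    """Apply radial distortion to an ideal normalized point (xn, yn)."""
    r2 = xn * xn + yn * yn
    factor = 1.0 + k1 * r2 + k2 * r2 * r2
    return xn * factor, yn * factor


def undistort(xd, yd, k1, k2=0.0, iterations=20):
    """Recover the ideal point from a distorted one by fixed-point iteration.

    Converges for moderate distortion; strongly distorted (fisheye-like)
    lenses need a different model.
    """
    xn, yn = xd, yd  # initial guess: the distorted point itself
    for _ in range(iterations):
        r2 = xn * xn + yn * yn
        factor = 1.0 + k1 * r2 + k2 * r2 * r2
        xn, yn = xd / factor, yd / factor
    return xn, yn


def pixel_shift(xn, yn, k1, focal_px):
    """Size of the distortion displacement in pixels for a given focal length."""
    xd, yd = distort(xn, yn, k1)
    return focal_px * math.hypot(xd - xn, yd - yn)


# Hypothetical barrel distortion (k1 < 0) applied to a point near the corner.
xd, yd = distort(0.5, 0.3, k1=-0.2)
print(undistort(xd, yd, k1=-0.2))
# Same lens and field of view, focal length in pixels doubled (e.g. 1080p to 4K).
print(pixel_shift(0.5, 0.3, -0.2, 1000.0), pixel_shift(0.5, 0.3, -0.2, 2000.0))
```

The last line shows why resolution matters: the same normalized displacement covers twice as many pixels when the focal length in pixels doubles.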

Leveraging 64-Bit Architecture for Enhanced Performance

The software has been entirely rewritten for a 64-bit architecture, which significantly improves the processing capabilities available for camera calibration and lens correction. This architectural upgrade allows larger files to be handled efficiently and speeds up the complex algorithms involved in calibrating cameras and correcting lens distortion. As a result, users can open, play, and save high-resolution videos quickly and accurately, in real time.
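
A rough calculation shows why address space matters at these resolutions. The sketch below assumes uncompressed frames at 3 bytes per pixel (8-bit RGB), which is an illustrative assumption and not a description of how the software stores video; `frame_bytes` is a hypothetical helper.

```python
def frame_bytes(width, height, bytes_per_pixel=3):
    """Size of one uncompressed frame in bytes (8-bit RGB by default)."""
    return width * height * bytes_per_pixel


# A 32-bit pointer can address at most 2**32 bytes (4 GiB) in total.
ADDRESS_SPACE_32BIT = 2 ** 32

uhd_4k = frame_bytes(3840, 2160)
uhd_8k = frame_bytes(7680, 4320)

print(uhd_4k, ADDRESS_SPACE_32BIT // uhd_4k)  # prints 24883200 172
print(uhd_8k, ADDRESS_SPACE_32BIT // uhd_8k)  # prints 99532800 43
```

Under these assumptions, even the theoretical maximum of a 32-bit address space holds only 43 uncompressed 8K frames, under two seconds of video at 30 frames per second, before counting the program itself or any working buffers. A 64-bit process is not subject to that ceiling.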

Conclusion

Camera calibration and lens correction together provide the precision required for successful Multi-Channel Fusion. As the integration of video surveillance and geographic mapping continues to advance, these processes remain essential. By ensuring that every camera is accurately calibrated and every video frame is corrected for lens distortion, we can unlock the full potential of this technology, providing tools that are powerful, accurate, and efficient.